Overview

In my previous six-part series of articles, which covered standing up a Kubernetes cluster and configuring it with NSX-T (using VMware NCP), I did some basic testing to show how the integration between the two products works. One area I did not cover was how Kubernetes can control the distributed firewall configuration for namespaces and their associated PODs, so that’s exactly what I am going to do in this article.

Before we get started I’m going to cover a few basics.

Kubernetes Definitions and Resources

In order for Kubernetes to create anything (i.e. a Kubernetes resource such as a Namespace, Deployment, POD or Service) it usually needs to be described in a definition, otherwise known as a manifest file (note that some things can be accomplished with a single command and no definition file). In part 6 of the Kubernetes and NSX-T series I briefly covered a manifest file for creating a load balanced service and PODs, which I extensively annotated, so check that out as a starting point. You can also go to the API docs for more information.

A definition contains a number of attributes and their associated values, which give Kubernetes the desired state of the resource. Definitions are usually written in YAML format (see here for info on YAML). This manifest file is a very basic example for a deployment (a replica set of PODs and their containers):

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      # Unique key of the Deployment instance
      name: deployment-example
    spec:
      # 3 Pods should exist at all times.
      replicas: 3
      # apps/v1 requires an explicit selector matching the Pod template labels
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            # Apply this label to the Pods; it must match the selector above
            app: nginx
        spec:
          containers:
          - name: nginx
            # Run this image
            image: nginx:1.10

Each type of resource has differing requirements so make sure you check out the documentation to validate how a particular resource manifest should look.

Network Policy Resource

To control ingress and egress to, from and between Kubernetes PODs (in my case using NSX-T), a “Network Policy” resource needs to be created with a manifest that details how that policy should be configured. When Kubernetes creates the resource, the NSX Container Plug-in (NCP) orchestrates NSX-T, telling it which rules need to be created within the distributed firewall.

Let’s build out a manifest to satisfy the following requirements. I’m going to use the deployment I created in the six-part series, which I called “lb-web-deployment”.

- Restrict access into the “lb-web-deployment” PODs to one IP address (192.168.110.10) on all TCP ports

- Allow the “lb-web-deployment” PODs unrestricted egress access to the 192.168.110.0/24 network for all TCP ports.

To start working out how to put this together, it is important to know that once a network policy selects a POD, all connections in each direction the policy covers (ingress, egress or both, as listed under “policyTypes”) are automatically denied unless specifically allowed by a policy. For this reason there is no attribute for denying traffic in a network policy.
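As an illustration of that implicit deny, the sketch below is a policy that selects PODs but defines no ingress or egress rules, so all traffic to and from them would be blocked. The policy name is hypothetical, and the “app: lb-web-app” label and “default” namespace are assumed to match the deployment used later in this article.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  # Hypothetical name, used only for this illustration
  name: deny-all-example
  namespace: default
spec:
  # Select the PODs the policy applies to
  podSelector:
    matchLabels:
      app: lb-web-app
  # Both directions are isolated; with no ingress or egress
  # rules listed, nothing is allowed in either direction
  policyTypes:
  - Ingress
  - Egress
```

Anything that should be reachable then has to be added back as an explicit allow rule, which is what the rest of this walkthrough does.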

Building the Manifest

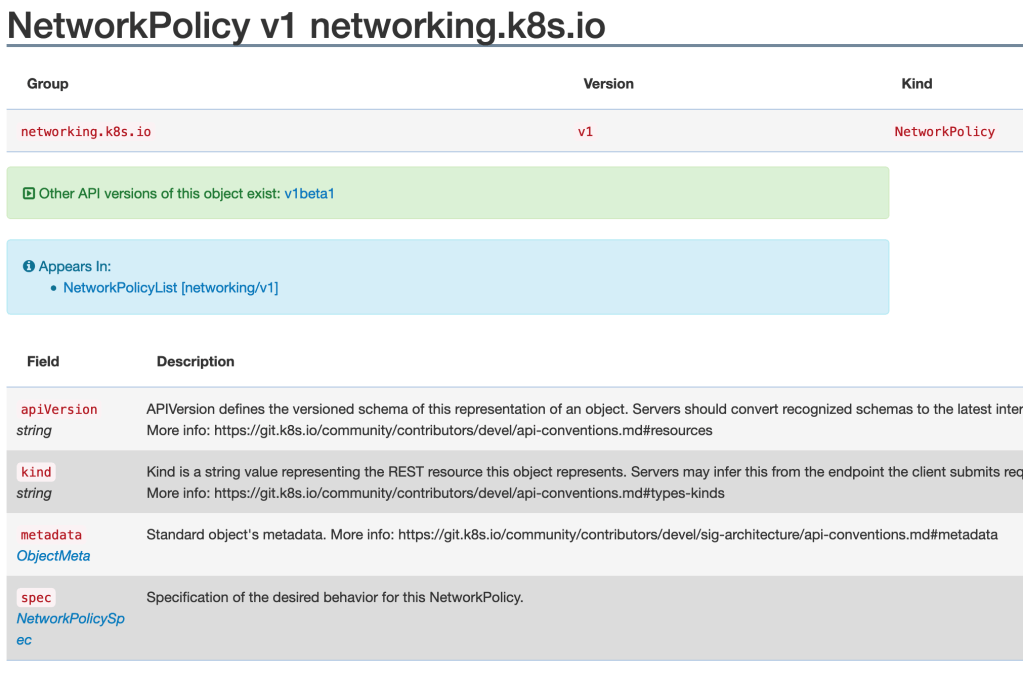

Using the API guide I can start to put together the manifest. I will need 4 fields to start with (apiVersion, kind, metadata and spec) and you will find these are standard for all types of resource.

The values for “apiVersion” and “kind” can be found on the API page (screenshot above). That gives us a starting manifest that looks like this.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
    spec:

Next I need to populate “metadata”. This is not just a string value but is a sub-object of type “ObjectMeta”, as shown by the field table in the network policy screenshot above. If I click on the object type it should show me what needs to be populated. There are many fields, as shown below, but the ones I really need are “name” (the name of the network policy to be created) and “namespace” (the namespace the PODs reside in).

Now I have a manifest that looks like this:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:

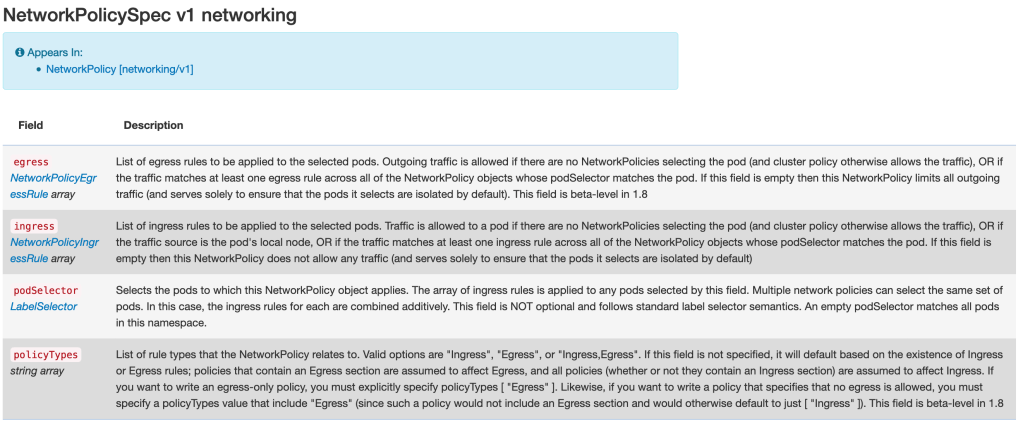

The lion’s share of the configuration needs to be placed into the “spec” attribute. Going back to the API guide for a network policy and clicking on the “NetworkPolicySpec” link reveals that there are 4 possible attributes that can be provided (ingress, egress, podSelector and policyTypes), of which only “podSelector” is mandatory.

The manifest can now have those attributes added. These attributes are mostly sub-objects (with the exception of “policyTypes”, which is a list of strings) so they have no string value of their own and will hold other sub-objects.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:
      ingress:
      egress:
      podSelector:
      policyTypes:

Let’s deal with “ingress” first. Using the API links shows that ingress is an array, with each array member being made up of “from” and “ports” attributes. “from” is also an array, and each of its members can hold attributes called “ipBlock”, “namespaceSelector” and “podSelector” (each of which is a sub-object). The API guide specifies that if a member allows ingress via “ipBlock” then it cannot use namespace selection or POD selection at the same time, so I won’t add either of those two attributes to the manifest.
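To make the peer options a little more concrete, here is a hedged sketch of an ingress section with two separate entries under “from”. Each entry is evaluated independently, so traffic matching either one is allowed; what is not permitted is mixing “ipBlock” with a selector inside the same entry. The “role: monitoring” label is a made-up example and is not part of the environment described in this article.

```yaml
ingress:
- from:
  # First peer: a source IP range
  - ipBlock:
      cidr: 192.168.110.10/32
  # Second peer: PODs in the same namespace carrying a
  # hypothetical label (not part of this article's setup)
  - podSelector:
      matchLabels:
        role: monitoring
```

Only the IP-based peer is needed for the requirements here, so the selector entry is left out of the manifest being built.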

Attributes beneath “ports” can also be expanded using the API guide. The manifest now looks like this:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:
      ingress:
      - from:
        - ipBlock:
        ports:
        - protocol:
          port:
      egress:
      podSelector:
      policyTypes:

Now I need to add the IP address I am going to allow to reach my PODs.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:
      ingress:
      - from:
        - ipBlock:
            cidr: 192.168.110.10/32
        ports:
        - protocol:
          port:
      egress:
      podSelector:
      policyTypes:

I want to allow ingress on all TCP ports. The API guide tells me that if I don’t provide a “port” attribute then all ports are allowed. This makes the manifest look as follows:

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:
      ingress:
      - from:
        - ipBlock:
            cidr: 192.168.110.10/32
        ports:
        - protocol: TCP
      egress:
      podSelector:
      policyTypes:
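For comparison, if only a single port should be opened rather than every TCP port, the “port” attribute can be kept alongside “protocol”. The fragment below is a sketch of just the ingress section; port 80 is an assumed value chosen for illustration.

```yaml
ingress:
- from:
  - ipBlock:
      cidr: 192.168.110.10/32
  ports:
  # Allow only TCP port 80 (assumed value for this example)
  - protocol: TCP
    port: 80
```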

Following the same methodology through the various attributes of the manifest results in the final manifest.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: lb-web-deployment
      namespace: default
    spec:
      ingress:
      - from:
        - ipBlock:
            cidr: 192.168.110.10/32
        ports:
        - protocol: TCP
      egress:
      - to:
        - ipBlock:
            cidr: 192.168.110.0/24
      podSelector:
        matchLabels:
          app: lb-web-app
      policyTypes:
      - Ingress
      - Egress
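One thing worth noting is that the egress rule in this manifest has no “ports” section, so on the Kubernetes side it places no restriction on protocol or port for traffic to 192.168.110.0/24. If egress should be limited strictly to TCP, a “ports” entry could be added in the same way as for ingress. This is a sketch of that variation of the egress section, not the manifest applied in this article:

```yaml
egress:
- to:
  - ipBlock:
      cidr: 192.168.110.0/24
  ports:
  # No "port" value, so every TCP port is allowed
  - protocol: TCP
```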

Applying the Manifest

I can now create the resource by applying the manifest file using “kubectl” from the Kubernetes master.

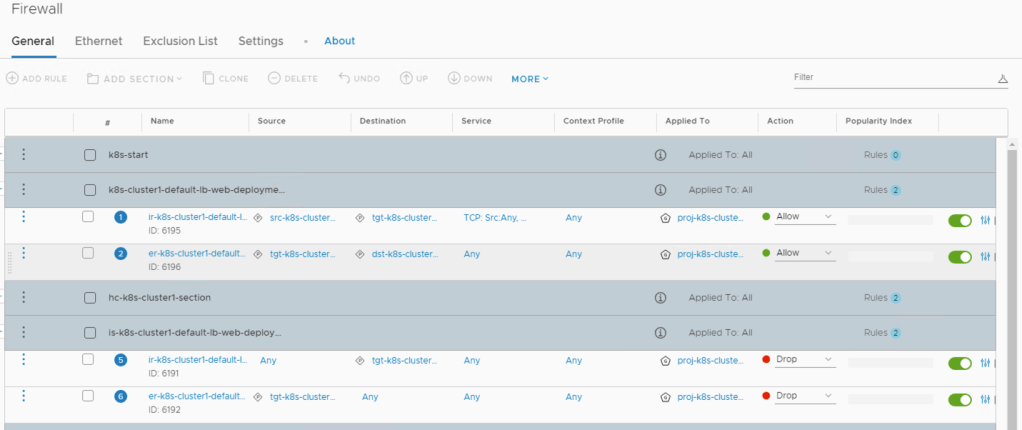

The result is two sets of rules created within the distributed firewall. The first set of rules allows traffic in and out of the PODs on the TCP protocol as specified in the manifest file. The second set of rules makes sure that all other traffic in and out of the PODs is denied.

This manifest content can of course be combined with other content for creating deployments etc. so that PODs, Services and Firewall Rules can be deployed by applying a single manifest.
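As a rough sketch of what that could look like, the file below uses the YAML document separator (“---”) to hold a Deployment and the network policy together. The Deployment details (replica count, container name and image) are assumptions for illustration and are not taken from the original series.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: lb-web-deployment
  namespace: default
spec:
  # Assumed replica count
  replicas: 2
  selector:
    matchLabels:
      app: lb-web-app
  template:
    metadata:
      labels:
        app: lb-web-app
    spec:
      containers:
      # Assumed container name and image
      - name: web
        image: nginx:1.10
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: lb-web-deployment
  namespace: default
spec:
  podSelector:
    matchLabels:
      app: lb-web-app
  policyTypes:
  - Ingress
  - Egress
  ingress:
  - from:
    - ipBlock:
        cidr: 192.168.110.10/32
    ports:
    - protocol: TCP
  egress:
  - to:
    - ipBlock:
        cidr: 192.168.110.0/24
```

Applying a file like this with a single “kubectl apply -f” command would create both resources in one pass.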

Hopefully this gives you an idea of how far things can go!
