Overview

In this article I’m going to cover some interesting functionality we demonstrated to one of our large banking customers to illustrate some DBaaS (Database as a Service) use cases. The idea was to show the customer which of the manual processes they currently perform could be automated using as much out-of-the-box (OOTB) functionality as possible.

In this case we chose to automate MS SQL database provisioning and some day 2 operations; however, the same principles from this article can be applied to almost anything.

The Use Cases

To show off the lifecycle of a SQL database I chose to implement the following functionality as a mixture of day 1 functionality and day 2 operations.

- Database Creation

- New Local User Creation (and granting role in SQL)

- Add User to SQL and Assign Role

- Database Cloning

- Change Database Ownership

- Database Deletion

I will cover some of these in this article, but the approach is the same for all of them.

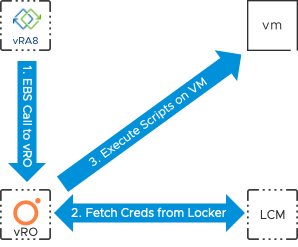

Remote Script Execution

The method I used to implement the use cases was Remote Script Execution. Essentially, this passes all the commands I want to run inside a workload into the guest VM via VMware Tools and asks the system to execute them on our behalf. This is not new functionality, as it has been available within vRO for many versions; however, the integration with vRA 8 and the use of other components such as vRealize Suite Lifecycle Manager is completely new.

So, I have a method of execution, but now I need to make it consumable inside vRA. To do this, you combine vRA Custom Resources with the Remote Script Execution functionality to produce drag-and-drop components on the vRA Cloud Template (aka blueprint) canvas that can pass commands to be executed into the guest workloads being deployed.

VMware Technical Marketing have produced a version 1.0 Remote Script Execution package for vRealize Orchestrator that builds upon existing functionality within vRO but also draws in new functionality and allows script components to be represented as Dynamic Types and therefore be turned into vRA Custom Resources. With both of these components combined I now have a complete mechanism to implement my use cases.

Finally, I need the commands that tell the guest OS, via VMware Tools, what to do. In this case I am going to use a combination of:

- PowerShell commands

- Windows OS CLI commands

- SQL unattended installation file

Putting the Pieces Together

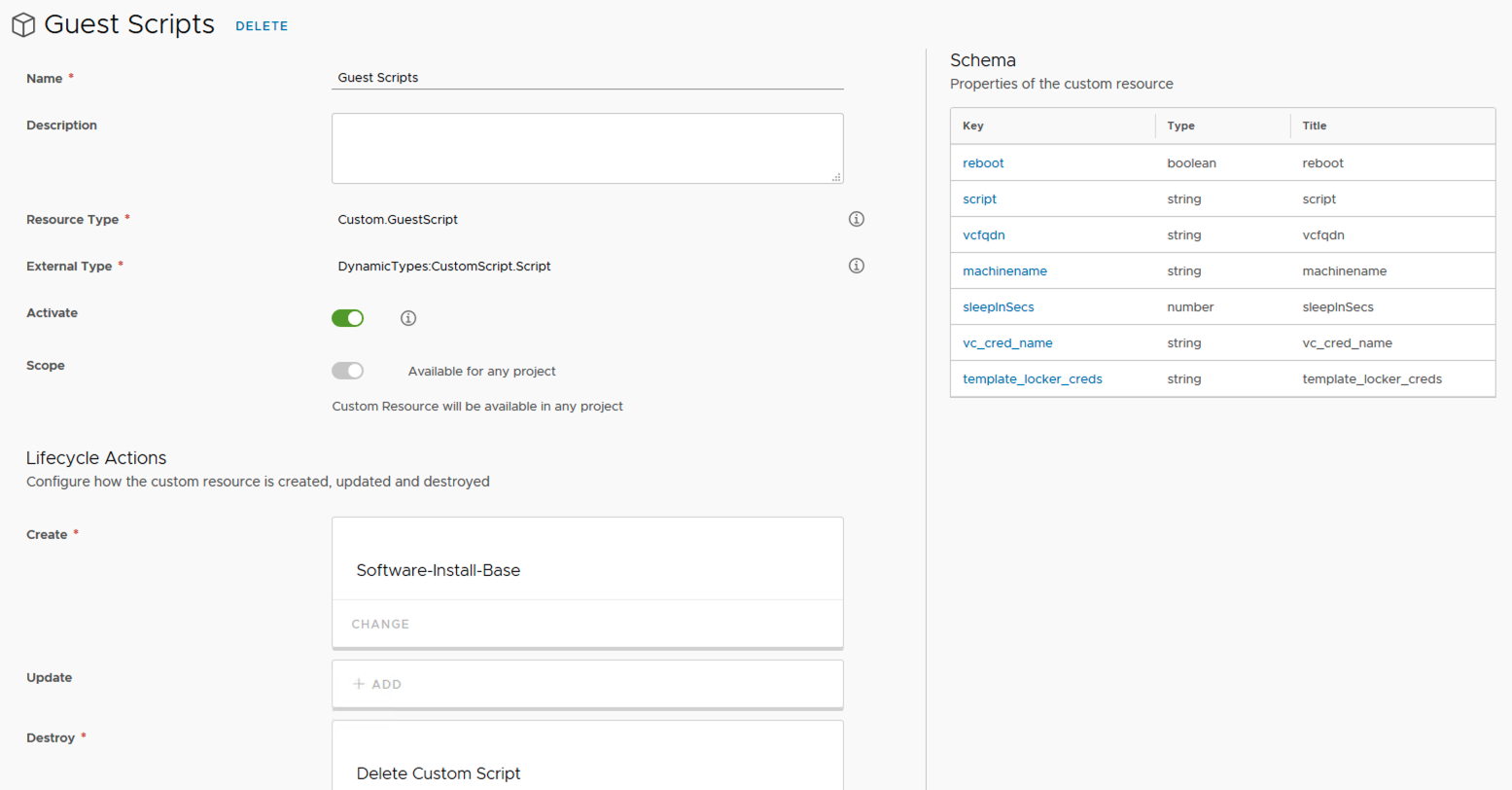

To turn the workflows into components that can be positioned within a vRA Cloud Template, I need to build a Custom Resource that represents script execution.

A Custom Resource needs to be backed by a vRO inventory object (i.e. not a string, number, etc.), so the workflows produced for this purpose leverage Dynamic Types.

Note that there is no API that vRO can use for the “find by id” workflows. Instead, a config element within vRO stores the script executions, and the “find by id” workflows query it whenever vRA needs to locate an object.
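To make the lookup pattern concrete, here is a minimal Python sketch of a key-value store standing in for the config element. It is not the code from the vRO package: the class names, field names, and methods are all hypothetical, and the real workflows are written for vRO rather than Python.

```python
import uuid


class ScriptExecution:
    """One script run. All attribute names here are hypothetical."""

    def __init__(self, vm_name, script):
        self.id = str(uuid.uuid4())  # the id vRA would later ask for
        self.vm_name = vm_name
        self.script = script
        self.status = "pending"


class ExecutionStore:
    """Stands in for the vRO config element: a flat id -> record map."""

    def __init__(self):
        self._records = {}

    def create(self, vm_name, script):
        execution = ScriptExecution(vm_name, script)
        self._records[execution.id] = execution
        return execution

    def find_by_id(self, execution_id):
        # Returns None when the id is unknown, instead of raising.
        return self._records.get(execution_id)

    def find_all(self):
        return list(self._records.values())

    def delete(self, execution_id):
        # Deleting an unknown id is a no-op.
        self._records.pop(execution_id, None)
```

The point of the sketch is only that, with no backing API to query, every object the platform needs to locate later has to be written to the store at creation time and removed again when the resource is destroyed.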

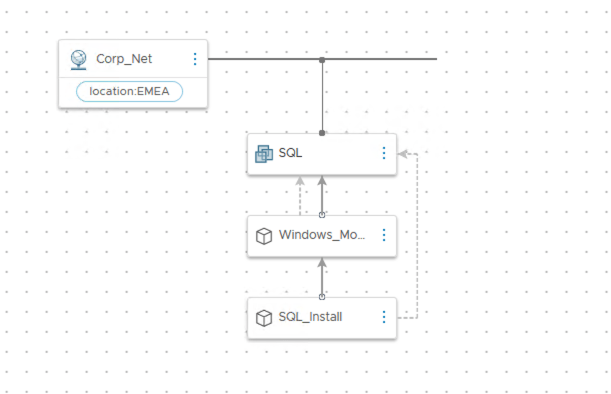

Once the Custom Resource is activated it can be dropped onto a Cloud Template just like any other component or integration that needs to be used. The Cloud Template below represents a single component VM that is using the Custom Resource to run scripts for configuring Windows OS modules and for installing SQL 2016.

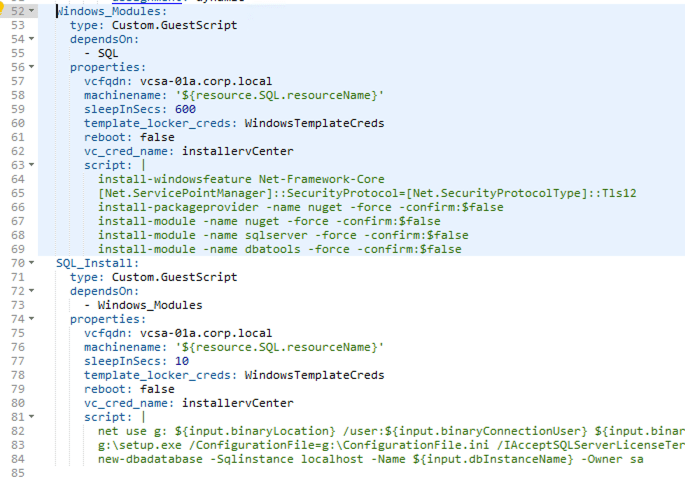

In this instance I have used two script components to achieve my goal, as vRO will time out if the script commands being executed take longer than 20 minutes to complete. My demo environment is nested and doesn’t have a huge amount of performance!
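If you have more steps than I did, the same idea can be planned up front. The sketch below is a hypothetical helper, not part of the package: it greedily packs ordered steps into script components so that each one stays under the 20 minute limit. The step names and duration estimates are invented for illustration.

```python
def split_into_components(steps, limit_minutes=20):
    """Pack (name, estimated_minutes) steps, in order, into batches.

    Each batch stays strictly under limit_minutes in total. The estimates
    are assumptions supplied by the caller, not measured values.
    """
    batches, current, total = [], [], 0
    for name, minutes in steps:
        if minutes >= limit_minutes:
            raise ValueError(f"{name} alone would reach the limit")
        # Start a new component if adding this step would hit the limit.
        if current and total + minutes >= limit_minutes:
            batches.append(current)
            current, total = [], 0
        current.append(name)
        total += minutes
    if current:
        batches.append(current)
    return batches


# Hypothetical estimates for a slow, nested lab environment.
plan = split_into_components([
    ("windows-features", 8),
    ("powershell-modules", 5),
    ("sql-install", 15),
    ("default-database", 2),
])
print(plan)
```

With these made-up numbers the plan comes out as two components, prerequisites first and the SQL install second, which mirrors the split I ended up with by hand.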

My first script component installs all the Windows prerequisites including PowerShell modules that will enable me to control SQL once it has been installed. The second script component is responsible for running an unattended SQL installation over the network from an SMB share (where both the SQL binaries and the unattended install file are located) as well as creating a default database using the PowerShell module installed previously.
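As a rough illustration of what the second script component hands to the guest, the sketch below assembles a SQL Server setup command line that points at an unattended install file on a share. The `/Q`, `/IACCEPTSQLSERVERLICENSETERMS` and `/ConfigurationFile` switches are standard SQL Server setup parameters; the share path and file name are placeholders, and this is not the exact command from my demo.

```python
def build_setup_command(share, config_file="ConfigurationFile.ini"):
    """Return a quiet, unattended SQL Server setup command line.

    share is the UNC path holding setup.exe and the config file; both the
    path and the default file name are placeholders for illustration.
    """
    share = share.rstrip("\\")  # tolerate a trailing backslash
    return (
        f'"{share}\\setup.exe" /Q /IACCEPTSQLSERVERLICENSETERMS '
        f'/ConfigurationFile="{share}\\{config_file}"'
    )


# Hypothetical share name.
print(build_setup_command(r"\\fileserver\sql2016"))
```

Keeping the binaries and the install file side by side on the share means the script component only needs the one path as an input.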

Once a deployment has been spun up from the Cloud Template, the script components are represented as components on the deployment. This means that you could create day 2 actions against the SQL install, for example adding a new database or creating a new database user.
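A day 2 action ultimately boils down to generating a small script from the user's inputs. The following sketch builds the T-SQL text for two of the use cases. The function names are hypothetical, and in my demo the work was done through a PowerShell module rather than hand-built T-SQL, so treat this purely as an illustration of the shape of the payload.

```python
def quote_ident(name):
    """Bracket-quote a T-SQL identifier, doubling any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def create_database_sql(db_name):
    return f"CREATE DATABASE {quote_ident(db_name)};"


def add_user_sql(db_name, login, role="db_datareader"):
    """Map an existing server login into a database and grant it a role."""
    return "\n".join([
        f"USE {quote_ident(db_name)};",
        f"CREATE USER {quote_ident(login)} FOR LOGIN {quote_ident(login)};",
        f"ALTER ROLE {quote_ident(role)} ADD MEMBER {quote_ident(login)};",
    ])


# Hypothetical database and login names.
print(create_database_sql("Sales"))
print(add_user_sql("Sales", "CORP\\svc_reporting"))
```

Quoting the identifiers matters here because the names come straight from a request form, and an unquoted value is an easy way to end up running something you did not intend.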

I hope this has given you an insight into how powerful this functionality can be. Of course, it is no substitute for a full-blown system such as Ansible for deploying configuration, but it allows you to provide advanced functionality with minimal overhead and tooling. It even opens up the option of allowing users to execute their own script packages at request time if you map in some user inputs.