A few years ago an internal side project within VMware produced a mechanism for triggering calls from vRealize Log Insight and vRealize Operations to other VMware and third-party products that neither product supported natively. This was known as Webhook Shims and still exists to this day on GitHub (https://github.com/vmw-loginsight/webhook-shims).

Recently one of my colleagues asked me to help get vRLI (vRealize Log Insight) to call vRO (vRealize Orchestrator) workflows when a particular event is seen in the logs. Webhook Shims is still the obvious choice for this, so in this article I am going to cover how to get it up and running.

How Does It Work

Essentially the implementation is a Flask application. Flask is a Python web framework built on WSGI (Web Server Gateway Interface); it accepts REST API calls and maps them to Python functions. In this case the implementation has a number of Python functions for a wide variety of endpoints, including:

- BigPanda

- Bugzilla

- Groove

- HipChat

- Jenkins

- Jira

- Kafka Topic

- Moogsoft

- MS Teams

- OpsGenie

- PagerDuty

- Pivotal Tracker

- Pushbullet

- ServiceNow

- Slack

- Socialcast

- vRealize Orchestrator

- Zendesk

Each set of functions for a given endpoint is held in a separate Python file for that endpoint type. The endpoint file dictates the application routes that are allowed as well as the HTTP methods that can be used (e.g. “PUT”, “POST”).
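To make the route-to-function idea concrete, here is a minimal sketch in plain Python. It is a toy stand-in for what a Flask route decorator does, not Flask itself and not code from the Webhook Shims repository; the `route` and `dispatch` helpers and the `/endpoint/demo` path are all hypothetical names I made up for illustration.

```python
# Toy illustration of mapping (HTTP method, path) pairs to Python functions.
# This is NOT Flask and NOT code from the shims repo; all names are hypothetical.

ROUTES = {}


def route(path, methods=("GET",)):
    """Register the decorated function for each allowed method on a path."""
    def decorator(func):
        for method in methods:
            ROUTES[(method.upper(), path)] = func
        return func
    return decorator


@route("/endpoint/demo", methods=("POST", "PUT"))
def demo_endpoint(payload):
    # A real shim would translate the payload and call the target product here.
    return 200, "demo received {} field(s)".format(len(payload))


def dispatch(method, path, payload):
    """Look up the handler; reject unknown paths and disallowed methods."""
    handler = ROUTES.get((method.upper(), path))
    if handler is None:
        known_paths = {p for (_, p) in ROUTES}
        if path in known_paths:
            return 405, "method not allowed"
        return 404, "not found"
    return handler(payload)
```

Calling `dispatch("POST", "/endpoint/demo", {"a": 1})` reaches the handler, while a “GET” on the same path is rejected because the route only allows “POST” and “PUT”. That is the same division of responsibility each endpoint file has in the shim.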

Prerequisites – Hosting Machine

Before I dive into configuration there are a couple of things that need to be in place: specifically, a Linux machine to host the webhook shims, with a valid IP address and a means to install any required packages.

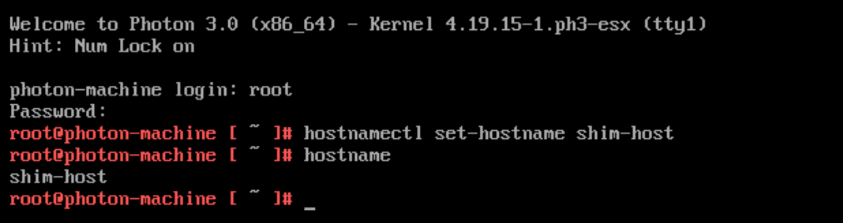

In this example I am going to be using the latest version of VMware Photon OS (3.0); however, you could use any Linux distribution you are comfortable with. To get Photon OS 3 set up I’m going to assign a new hostname and a static IP address to an instance deployed straight from OVA into vSphere.

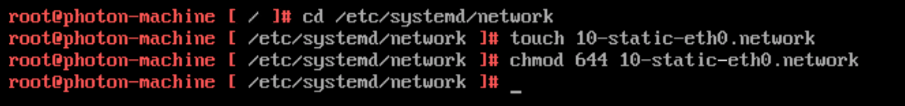

To get a static IP running in Photon I need to create a new network configuration file for systemd (specifically its systemd-networkd service) to use, with the appropriate file permissions.

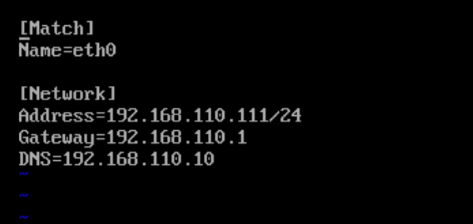

The format of the new file can be copied from the existing DHCP file; however, that file does not contain all of the required attributes. This is my basic static file.

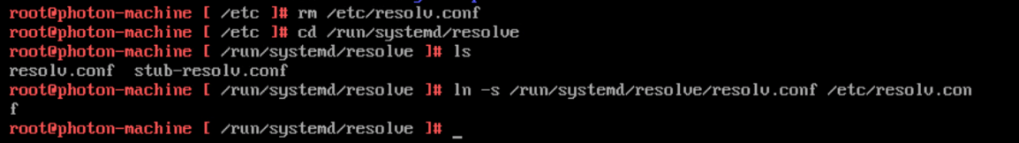

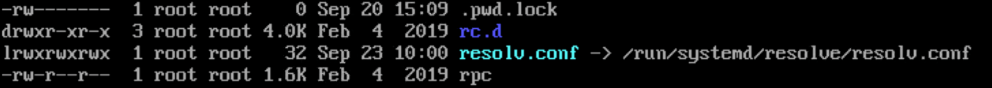
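For readers who cannot see the screenshots, the sketch below renders the general shape of a static systemd-networkd file. The interface name and every address are placeholders from the documentation ranges, not the values I used, and the exact file name under /etc/systemd/network/ is left to you (it needs to sort ahead of the DHCP file so that it is matched first).

```python
# Sketch: render a static systemd-networkd configuration file.
# The interface name and all addresses are placeholders, not real values.

def render_static_network(interface, address_cidr, gateway, dns_servers):
    """Return the text of a .network file for a single static IPv4 address."""
    lines = [
        "[Match]",
        "Name={}".format(interface),
        "",
        "[Network]",
        "Address={}".format(address_cidr),
        "Gateway={}".format(gateway),
    ]
    # One DNS= line per server; systemd-networkd accepts the key repeatedly.
    lines.extend("DNS={}".format(server) for server in dns_servers)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_static_network("eth0", "192.0.2.10/24", "192.0.2.1", ["192.0.2.53"]))
```

The printed text can be pasted into the new file; the network service then needs restarting for the change to take effect.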

For DNS to work, the symbolic link for resolv.conf needs to be re-created. In Photon OS 3.0 this link points to the stub-resolv.conf file, which does not get updated when the DNS IP address is added to the network configuration file above.

A couple of quick tests verify that DNS is now fully functional and that the static IP address has been assigned to the interface.
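Re-creating the link is a remove-then-symlink operation. Here is a small sketch of it in Python; the `relink` helper is my own name, and the target path in the commented-out call is an assumption based on where systemd-resolved normally writes its full resolver file, so check it on your own system before running anything as root.

```python
import os


def relink(link_path, target):
    """Replace link_path with a symbolic link pointing at target."""
    # lexists() is also true for a dangling symlink, which exists() would miss.
    if os.path.lexists(link_path):
        os.remove(link_path)
    os.symlink(target, link_path)
    return os.readlink(link_path)


if __name__ == "__main__":
    # Assumed paths for a systemd-resolved host; modifying /etc needs root.
    # relink("/etc/resolv.conf", "/run/systemd/resolve/resolv.conf")
    pass
```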

Prerequisites – Packages

For this installation I am going to install everything directly on my server. Alternatively, you can run the shims as a Docker container (“docker run -it -p 5001:5001 vmware/webhook-shims”) if you want to do it that way.

Now that my shim host is up and running I need to get a number of packages installed which Webhook Shims requires in order to run. These include:

- wget

- pip

- python2

- python-xml

- virtualenv

- git

Photon uses a package manager similar to YUM called “tdnf” (Tiny DNF, where DNF stands for Dandified YUM) to install and manage software packages. It reads and uses YUM repositories; however, if you want to use YUM rather than tdnf you can use tdnf to install YUM ;-).

Anyway, here I am going to use tdnf for everything I need.

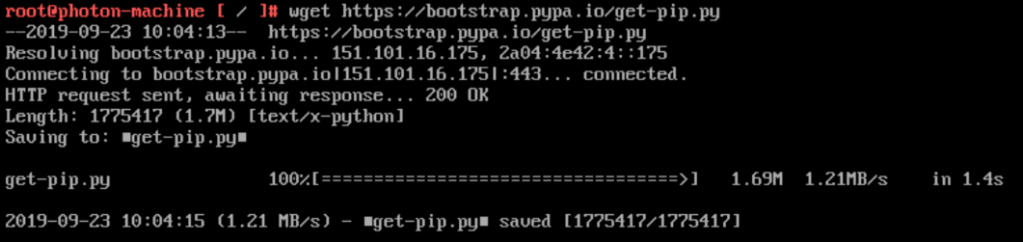

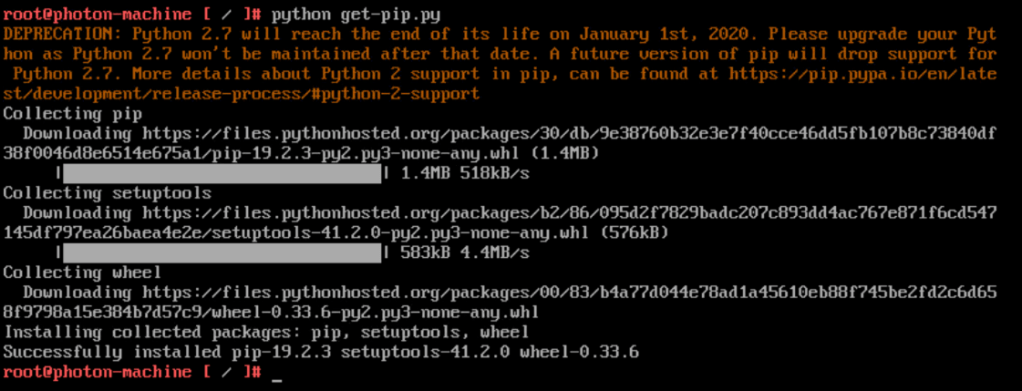

pip is installed via a Python script which is pulled down using “wget”.

Once pip is available it is used to install “virtualenv”. This provides the ability to create isolated Python environments on the same machine, each with its own set of installed packages. This is not strictly needed if the machine is only going to run a single application.

Installing Webhook Shims

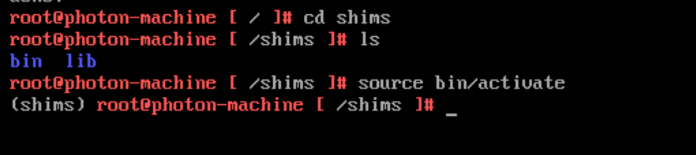

As I am using “virtualenv” the first thing I need to do is to create a new environment. I’m using the name “shims” for this but you can use anything you want, as long as it is unique on the machine.

Note that the environment directory is created in the current working directory. In my case I am running the command at “/”.

Once the environment is created I can change to the directory and see the contents (library files for the Python environment). To activate the environment, the activate script needs to be sourced using “source path_to_activate_script”. The name of the activated environment is then added to each shell prompt.

To install Webhook Shims I need to clone the git repository that hosts the files on github.com (https://github.com/vmw-loginsight/webhook-shims.git).

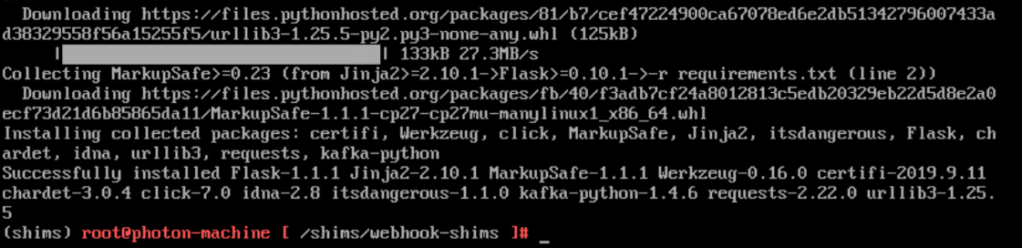

The Flask implementation uses Python, so a requirements.txt file (located in the “webhook-shims” directory) lists the packages that must be in place. To make sure the required packages are installed you can run pip against the requirements.txt file (“pip install -r requirements.txt”). Anything that is missing will be pulled down and installed as necessary.

Finally a firewall rule for iptables can be added to make sure that traffic to the gateway makes it to the application.

Configuring Webhook Shims

My use case is purely for calling workflows via vRLI so I am not going to cover every single integration. All valid endpoint types have a distinct Python file which you will see if you perform a directory listing of “YOUR_ENV/webhook-shims/loginsightwebhookdemo”.

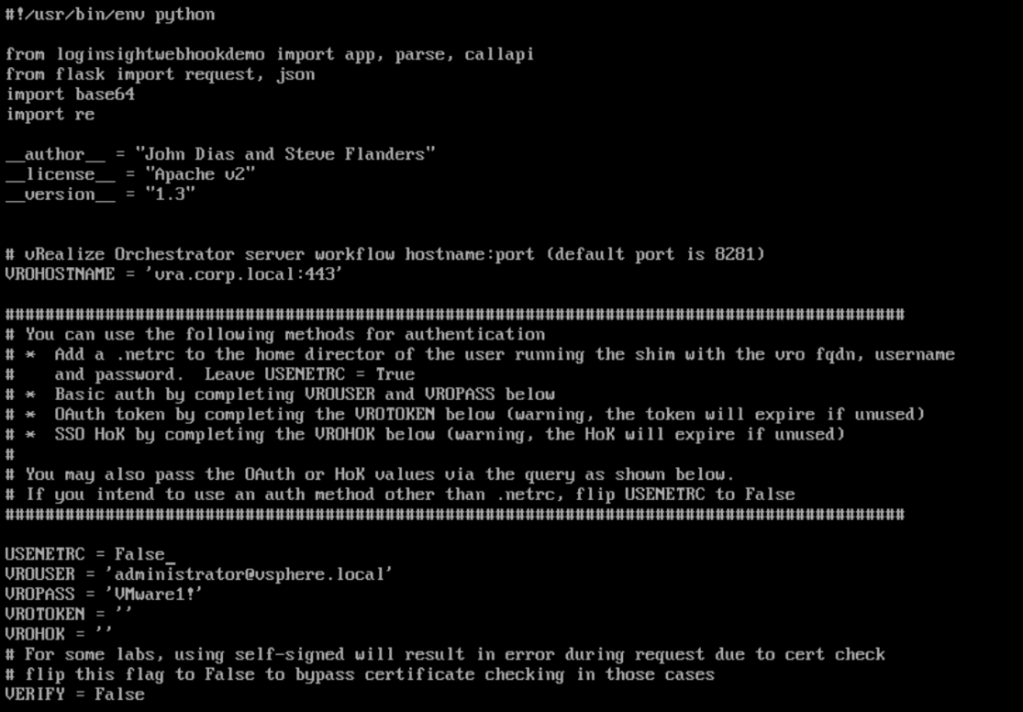

To get the integration working between the gateway and vRO I need to edit the vRO Python file (vrealizeorchestrator.py). It contains a number of configuration attributes such as the hostname and authentication options. Here you can see my updated file where I am using basic authentication only. I have elected to add the auth details in the file; however, I could also have used a .netrc file to hold these details.
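If you have not used a .netrc file before, the sketch below shows the one-line entry format and how Python's standard `netrc` module parses it. The host name and credentials are made up, and the example writes a throwaway file rather than touching the real ~/.netrc. It only illustrates the file format; how the shim itself consumes the file is not covered here.

```python
import netrc
import os
import tempfile

# Hypothetical host and credentials, used only for this demonstration.
SAMPLE = "machine vro.example.com login svc_shim password s3cret\n"


def read_credentials(netrc_path, host):
    """Return (login, password) for host, or None if there is no entry."""
    entry = netrc.netrc(netrc_path).authenticators(host)
    if entry is None:
        return None
    login, _account, password = entry
    return login, password


def demo():
    # Write a temporary file so the real ~/.netrc is never read or changed.
    with tempfile.NamedTemporaryFile("w", suffix=".netrc", delete=False) as handle:
        handle.write(SAMPLE)
        path = handle.name
    try:
        return read_credentials(path, "vro.example.com")
    finally:
        os.remove(path)
```

A real ~/.netrc should be readable only by its owner, since it holds a password in plain text.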

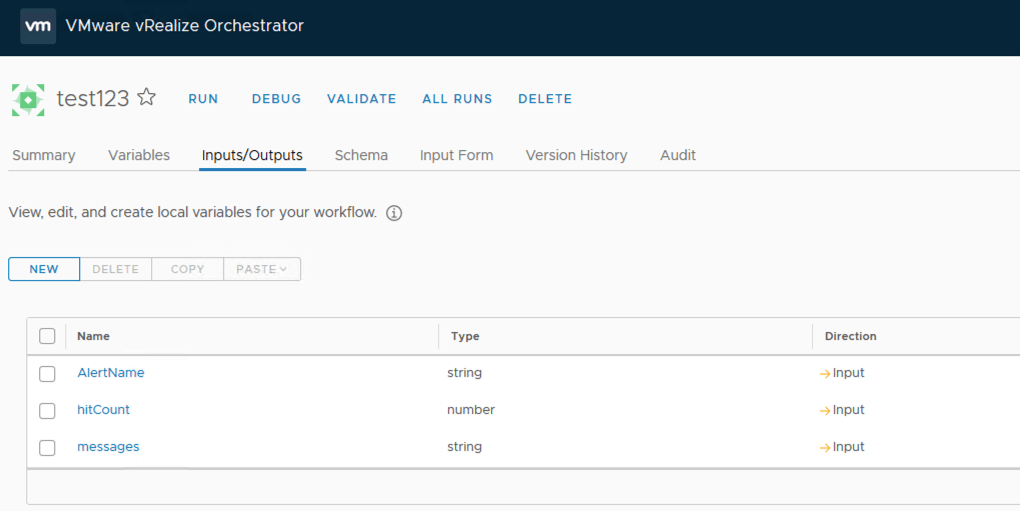

Functions further down the file dictate the inputs that the implementation expects a called vRO workflow to have. Note that these inputs MUST match any workflow you want to call otherwise an error code will be returned to the gateway and ultimately the calling application (vRLI in this case).

The first part of each parameter defines where the value comes from; the second part defines the name and type of the workflow input.
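As a rough sketch of that two-part structure, the code below builds a workflow execution body in the shape I understand the vRO REST API to expect for string inputs. The input names `alertName` and `messages`, and the alert fields they are read from, are hypothetical; substitute whatever your copy of the shim file and your workflow actually define, and verify the body format against the API documentation for your vRO version.

```python
import json


def vro_string_parameter(name, value):
    """Build one string-typed workflow input in the vRO execution format."""
    return {
        "name": name,          # must match the workflow input name exactly
        "type": "string",      # must match the workflow input type
        "scope": "local",
        "value": {"string": {"value": str(value)}},
    }


def build_execution_body(alert):
    """Map fields of an incoming alert dict onto workflow inputs.

    'alertName' and 'messages' are hypothetical input names used only
    for illustration.
    """
    return {
        "parameters": [
            vro_string_parameter("alertName", alert.get("name", "")),
            vro_string_parameter("messages", alert.get("messages", "")),
        ]
    }


if __name__ == "__main__":
    print(json.dumps(build_execution_body({"name": "demo", "messages": "hi"}), indent=2))
```

The `alert.get(...)` call is the "where the value comes from" half, and the name and type passed to `vro_string_parameter` are the "workflow input" half.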

Starting Webhook-Shims

The shims implementation is started by running the “runserver.py” Python file, specifying the port number to run on. The application will log to the screen displaying each call that comes in.

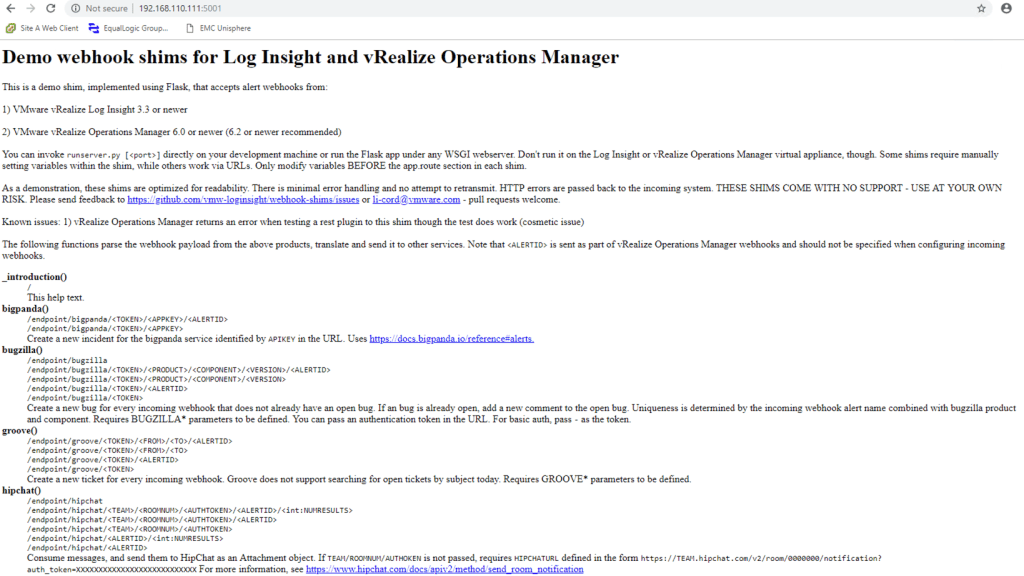

To test that the gateway is functioning you can execute a web call against the IP or hostname with the correct port number. If all is well, a list of endpoint types and supported calls should be returned, as shown below.

The Workflow

To test connectivity between the shim and vRO I am going to create a test workflow for the shim to call. The inputs will match those specified in the vrealizeorchestrator.py file on the shim server.

Workflows are executed by workflow ID rather than name, as this is how the vRO REST API is written (workflow names are not guaranteed to be unique). The workflow ID can be seen on the summary page of each workflow; however, it cannot be copied from there. Instead, the “version history” tab of a workflow lets you locate the ID field and copy it from the differences text.

Here I am using the “RESTClient” extension for Mozilla Firefox to send a REST “POST” call to the shim. The workflow ID is passed as part of the URL. The shim expects any call it receives to have two inputs within the call payload. For this test I have added them to the test REST call.

The call can be tracked on the shim server log from receiving the call through to executing the vRO workflow. In this instance a “202” (Accepted) response was received from vRO, which indicates that vRO accepted the execution request.
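If you would rather script the test than use a browser extension, the same call can be prepared with Python 3's standard library, run from a workstation rather than from the shim's own Python 2 environment. This is only a sketch: the `/endpoint/vro/<id>` path is my assumption of the route (confirm it against the route list your running gateway returns), the host name is hypothetical, the workflow ID is a placeholder, and the two input names are invented.

```python
import json
import urllib.request


def build_shim_request(base_url, workflow_id, payload):
    """Prepare (but do not send) a JSON POST to the shim's vRO endpoint."""
    # The "/endpoint/vro/<id>" path is an assumption; confirm it against the
    # route list your running gateway returns.
    url = "{}/endpoint/vro/{}".format(base_url.rstrip("/"), workflow_id)
    data = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    request = build_shim_request(
        "http://shim.example.com:5001",              # hypothetical shim host
        "00000000-0000-0000-0000-000000000000",      # placeholder workflow ID
        {"inputOne": "hello", "inputTwo": "world"},  # hypothetical input names
    )
    # Uncomment to actually send the call once the shim is running:
    # with urllib.request.urlopen(request, timeout=10) as response:
    #     print(response.status)
    print(request.get_method(), request.full_url)
```

Building the request separately from sending it makes it easy to check the URL and payload before anything reaches vRO.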

The workflow execution log backs this up.

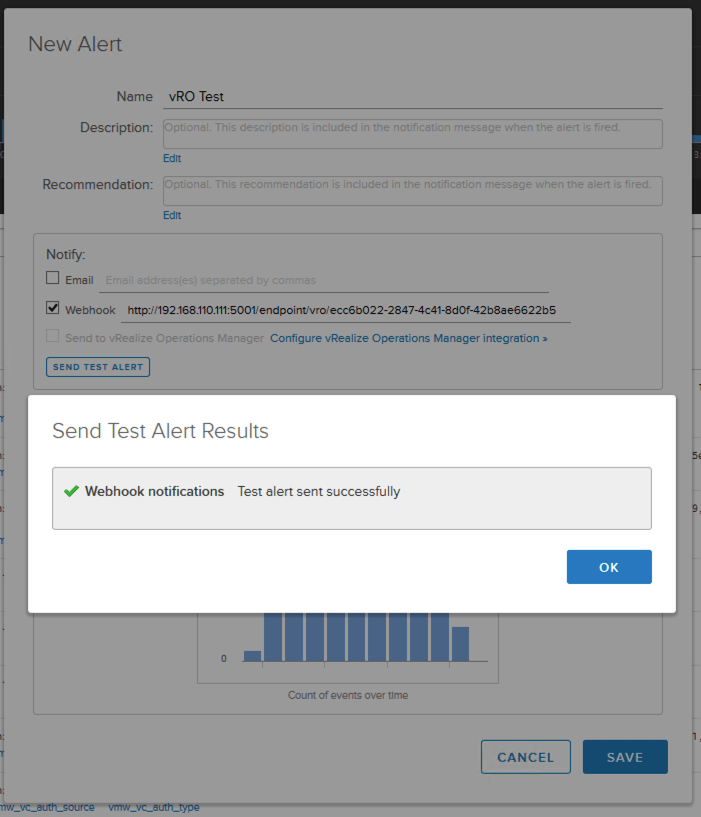

Integrating Log Insight

The final part of the puzzle is to configure vRLI to fire the call to the shim server. Once you have created a query within vRLI that identifies the log entries you want, an alert can be created from it. This requires the same URL as the RESTClient test; however, you do not have to define the method, as this is dictated by the calling application.

Remember this shim is not officially maintained by VMware and there is no guarantee it will continue working with future product versions.
