Overview

In this series of articles I’m going to cover getting Kubernetes (with Docker) and NSX-T stood up from scratch, to the point where new namespaces and applications can be deployed with automated network constructs in NSX-T.

This is a series of blog articles I have wanted to write for some time now but getting enough time and resource to plan, test and write them is always the issue. A lull for VMworld is the perfect opportunity so it’s time to get started!

I should call out I am a Kubernetes novice but love throwing myself into unfamiliar technology to expand my skill sets. After all, it can’t be that hard…

This series of articles includes:

- Part 1 – Deploying Container Hosts

- Part 2 – Deploying Docker & Kubernetes

- Part 3 – Creating a Kubernetes Cluster

- Part 4 – Configuring NSX-T Ready for NCP

- Part 5 – Deploying and Configuring NCP for NSX-T

- Part 6 – Testing the Platform

High Level Layout

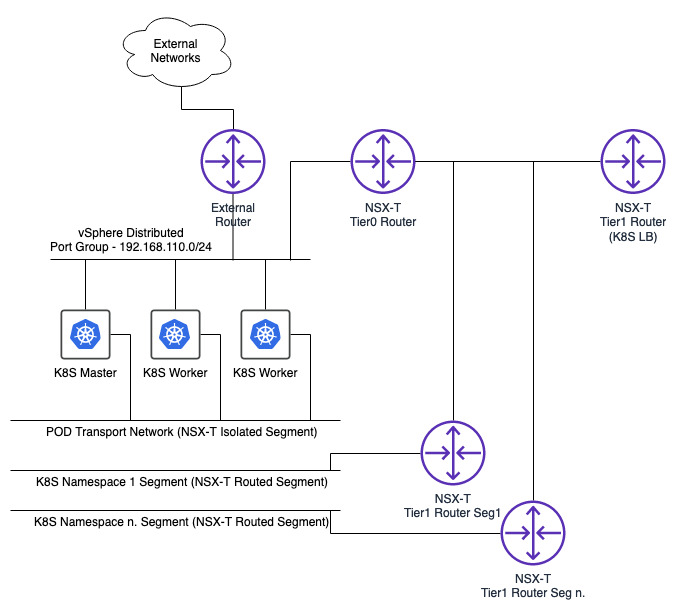

The following simple diagram should give you an idea of what I am trying to build.

I will be using an existing vSphere distributed portgroup for API access to Kubernetes and Docker host management (you could also use an NSX-T segment for this and benefit from micro-segmentation etc.). Each of the 3 container/Kubernetes VMs will also have a second interface connected to a transport network for inter-pod communications.

Communications from containers to the outside world (and vice versa) will be via container ports that are connected to the relevant namespace NSX-T segments and linked to the virtual interfaces of the container host virtual machines.

Container Host OS

I am going to be using CentOS 7.6 for this article as I have a suitable CentOS template already built and also because every other article I find uses Ubuntu. I like to be different! It’s also important that you validate your chosen OS type and version against the VMware documentation, as the OS, Kubernetes and the NSX Container Plug-in (NCP) all need to work together.

The compatibility information for the VMware NCP can be found within the NCP documentation (not in the interoperability matrices). This screenshot relates to the current NCP version (at the time of writing) which is 2.5.0.

The link to this documentation is:

Using the documentation I can verify that my version of NSX-T (2.5.0) is supported, as are vSphere (6.7 U3) and my container OS (CentOS 7.6 in my case). I can also identify the latest version of Kubernetes I can use (1.14 here).

My CentOS build has a user called “admin” defined (belonging to groups “admin” and “wheel”), and the root account password is left unset to prevent anyone logging on as root directly.

Container Host Spec

For this series of articles I am going to be using 3 virtual machines that will function as container/Kubernetes hosts. One will be the Kubernetes master and the other 2 will be Kubernetes worker nodes. Each host will have 2 vCPUs, 8GB of RAM and a 40GB disk. For a production environment you may want to scale this upwards and/or increase the number of container host VMs.

Building the Container Hosts

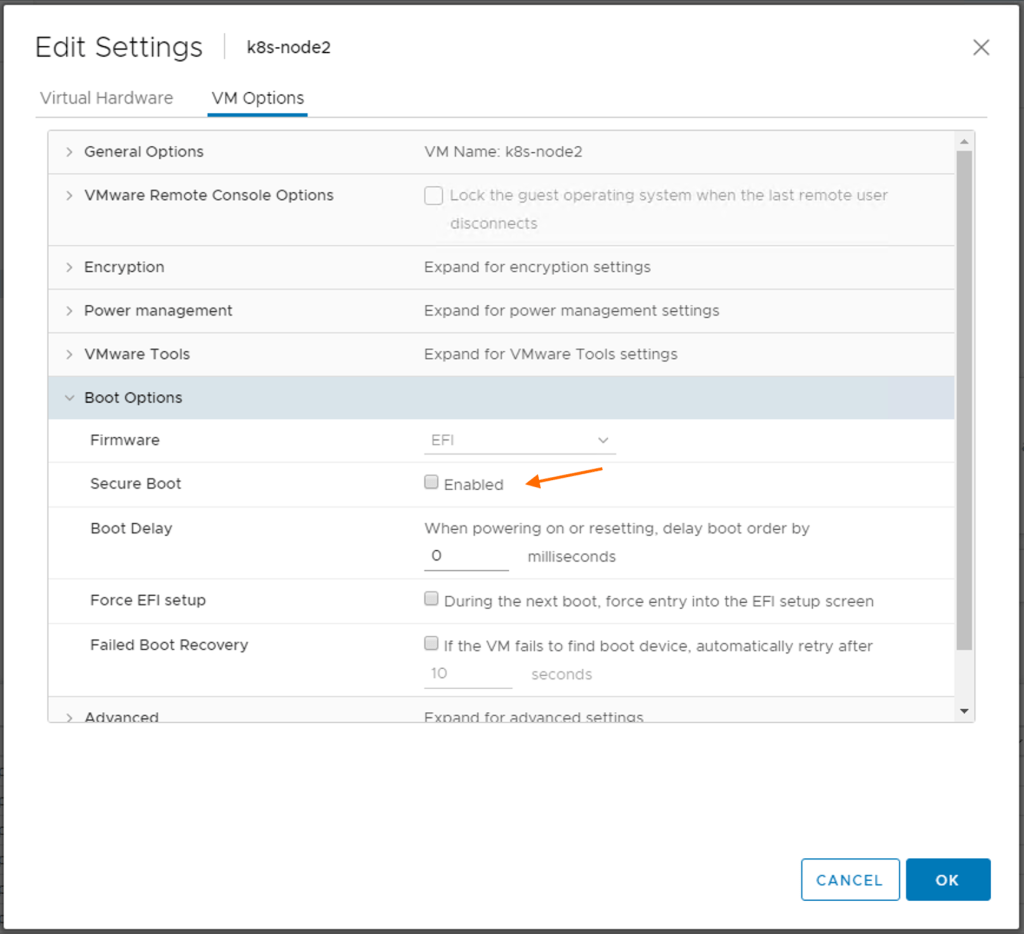

Before I even power on the container hosts I am going to disable secure boot within vSphere for each machine. Testing multiple scenarios using NCP 2.4.0 showed that Open vSwitch services did not start successfully if secure boot was enabled. I have re-tested this with NCP 2.5.0 and, despite Open vSwitch now residing within a container, user space commands to scale, deploy and delete deployments still fail with NetworkPlugin cni errors if secure boot is enabled.

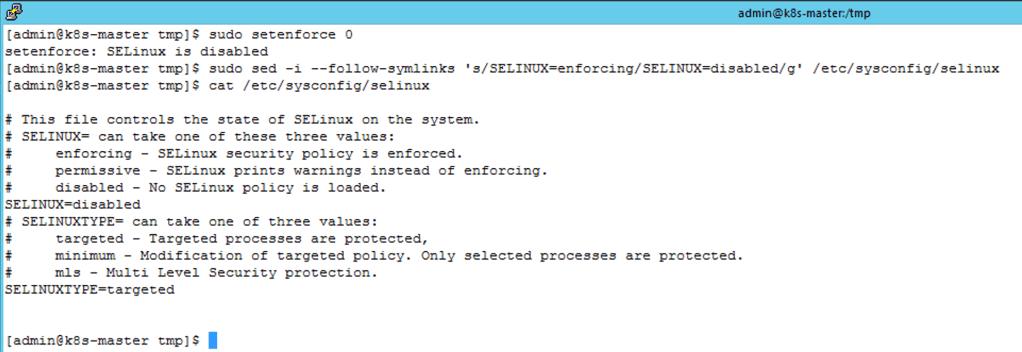

The next step is to disable SELinux. This is because some library files that Docker uses are falsely identified as a threat by SELinux, which then prevents Docker from running. You can disable SELinux for the current session and for every subsequent reboot as follows.
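
The commands in the screenshot are not available as text, so here is a minimal sketch of one common way to do this on CentOS 7. It is not necessarily what was run on these hosts. `persist_selinux_mode` is a hypothetical helper name, and the default path `/etc/selinux/config` is assumed to be the standard CentOS location.

```shell
#!/usr/bin/env bash
# Sketch only: persist_selinux_mode is a hypothetical helper, not a system tool.
# Usage: persist_selinux_mode <mode> [config_file]
persist_selinux_mode() {
    local mode="$1"
    local config_file="${2:-/etc/selinux/config}"
    # Rewrite only the active SELINUX= line; comment lines and SELINUXTYPE= are untouched.
    sed -i "s/^SELINUX=.*/SELINUX=${mode}/" "$config_file"
}

# Typical use on a container host, run as root:
#   setenforce 0                    # permissive for the running session only
#   persist_selinux_mode disabled   # applies from the next reboot
```

Note that `setenforce 0` only switches the running system to permissive mode. The change in the config file is what keeps SELinux off after a reboot.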

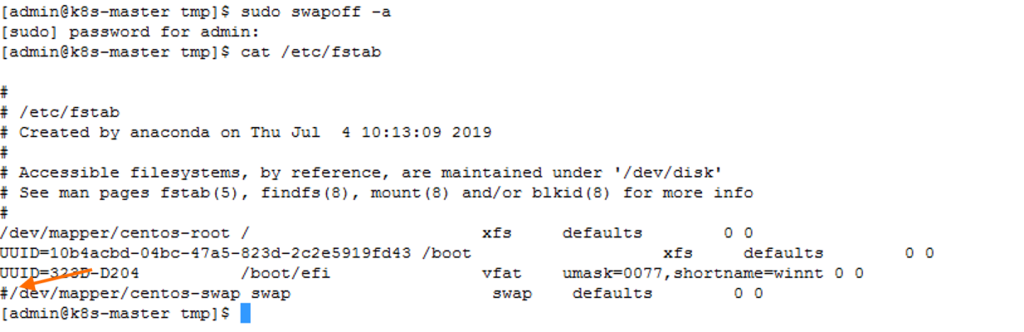

Swap also needs to be disabled on each host. This is a Kubernetes requirement, and it can be done with a one-off command for the current run-time and made permanent by editing the /etc/fstab file. Here you can see I have commented out the line for the swap file.
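
As a rough sketch of the two steps described above (assuming a standard `/etc/fstab` layout where the swap entry has a whitespace-delimited `swap` field), something like the following could be used. `comment_out_swap` is a hypothetical helper name introduced for illustration.

```shell
#!/usr/bin/env bash
# Sketch only: comment_out_swap is a hypothetical helper, not a system tool.
# Usage: comment_out_swap [fstab_file]
comment_out_swap() {
    local fstab_file="${1:-/etc/fstab}"
    # Prefix '#' to any uncommented entry that has a whitespace-delimited "swap" field.
    sed -i -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab_file"
}

# Typical use on a container host, run as root:
#   swapoff -a          # turn swap off for the current run-time
#   comment_out_swap    # keep it off after a reboot
```

The helper skips lines that are already commented, so running it twice does not add a second `#`.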

The last part of the basic OS configuration is to load the bridge network filter kernel module.

sudo modprobe br_netfilter
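
A module loaded with `modprobe` does not survive a reboot by itself. One possible way to make it persistent on a systemd-based OS such as CentOS 7 is a file under `/etc/modules-load.d`. This is an assumption on my part rather than a step taken in this build, and `persist_kernel_module` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch only: persist_kernel_module is a hypothetical helper, not a system tool.
# Usage: persist_kernel_module <module> [modules_load_dir]
persist_kernel_module() {
    local module="$1"
    local load_dir="${2:-/etc/modules-load.d}"
    # systemd-modules-load reads *.conf files here at boot, one module name per line.
    printf '%s\n' "$module" > "${load_dir}/${module}.conf"
}

# Typical use on a container host, run as root:
#   modprobe br_netfilter
#   persist_kernel_module br_netfilter
#   lsmod | grep br_netfilter    # confirm the module is loaded
```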

In the next part of this series I’ll be looking at deploying docker and Kubernetes onto the hosts.
