Overview

Welcome to the third part of this series of articles covering Kubernetes and NSX-T. So far in this series I have deployed some hosts to be used for containers and Kubernetes with CentOS 7.6, prepped them for use and installed Docker and Kubernetes. In this part I am going to walk through creating a Kubernetes cluster.

This series of articles includes:

- Part 1 – Deploying Container Hosts

- Part 2 – Deploying Docker & Kubernetes

- Part 3 – Creating a Kubernetes Cluster

- Part 4 – Configuring NSX-T Ready for NCP

- Part 5 – Deploying and Configuring NCP for NSX-T

- Part 6 – Testing the Platform

Open vSwitch

In previous iterations of the VMware NSX Container Plug-in (NCP), Open vSwitch had to be installed on each container host server (in my case all 3 CentOS VMs) and configured with a bridge interface linked to the cluster network interface (i.e. the interface designated for the POD transport network in my architecture diagram in part 1). With NCP 2.5.0 this is no longer required, as Open vSwitch is deployed into a container (named “nsx-ovs”) within the NSX Node Agent Kubernetes POD on each host. This makes things a lot simpler!

Initializing the Cluster

Creating a Kubernetes cluster is driven not only from the MASTER node but also from each worker node. The first step is to initialize the cluster from the MASTER appliance, specifying the IP address that the API will be available on (this is the routable IP address of the MASTER CentOS VM connected to my distributed port group).

Note that in this example I am running the initialization as root; you can equally do it using sudo if logged in as another user.
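As a rough sketch of this step, assuming the cluster is being built with kubeadm, the initialization command can be put together as follows. The address 192.0.2.10 is only a placeholder for the routable IP of the MASTER VM, and the variable names are my own for illustration.

```shell
# Placeholder address (assumption): substitute the routable IP of your MASTER VM.
MASTER_IP="192.0.2.10"

# --apiserver-advertise-address sets the IP address the API server advertises.
INIT_CMD="kubeadm init --apiserver-advertise-address=${MASTER_IP}"

# Print the command for review; run the printed command as root
# (or prefixed with sudo) on the MASTER only.
echo "${INIT_CMD}"
```

Building the command in a variable first makes it easy to check the address before anything is changed on the host.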

Once the initialization has completed the output will provide commands that will need to be run (some on the MASTER and the others on the worker nodes) which can be copied and pasted as appropriate.

Creating User Home Directory Configuration

Management plane commands are issued by non-root user accounts. In order for any non-root account to be able to issue Kubernetes commands the account in question needs to have an environment configuration file created within the home directory that specifies how the Kubernetes cluster is configured (port numbers etc.).

To complete this procedure you simply need to create a hidden directory (“.kube”) beneath the user's home directory and copy “/etc/kubernetes/admin.conf” to a file called /home/<INSERT_USER_HERE>/.kube/config. The ownership also needs to be set so that the file belongs to the user and the user's group. The commands to complete this are also listed in the output of the cluster initialization command (see screenshot above).
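The steps above can be sketched as a small shell function. This is a minimal illustration rather than the exact commands printed by the initialization. The function name `setup_kubeconfig` and the user `k8suser` in the usage comment are hypothetical.

```shell
# Copy a cluster admin kubeconfig into a user's home directory.
# Arguments: 1) source kubeconfig, 2) target home directory, 3) owner as user:group.
setup_kubeconfig() {
  src="$1"
  home_dir="$2"
  owner="$3"

  # Create the hidden .kube directory if it does not already exist.
  mkdir -p "${home_dir}/.kube"

  # Copy the admin configuration to the file name kubectl looks for by default.
  cp "${src}" "${home_dir}/.kube/config"

  # Hand the directory and the file over to the target user and group.
  chown -R "${owner}" "${home_dir}/.kube"
}

# Example on the MASTER, run with root privileges (hypothetical user "k8suser"):
# setup_kubeconfig /etc/kubernetes/admin.conf /home/k8suser k8suser:k8suser
```

Once the file is in place, that user can run kubectl without pointing at the configuration file explicitly.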

Joining Worker Nodes to the Cluster

Each Kubernetes worker node now needs to be joined to the initialized cluster. This is an operation you must run from each worker node's shell in turn. The join command is specific to each cluster, as it requires a token (along with a hash of the cluster CA certificate) that is generated when the cluster is initialized. The full command is also included in the output of the cluster initialization, allowing you to just copy and paste it from master to worker as required.
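For reference, the general shape of the join command is sketched below, again assuming kubeadm. The endpoint is a placeholder (6443 is kubeadm's default API port), and `<token>` and `<hash>` are deliberately left unfilled because the real values only come from your own cluster's initialization output.

```shell
# Placeholders (assumptions): use the values printed by your own "kubeadm init".
MASTER_ENDPOINT="192.0.2.10:6443"
TOKEN="<token>"
CA_HASH="sha256:<hash>"

# The token authenticates the node; the CA certificate hash lets the node verify the MASTER.
JOIN_CMD="kubeadm join ${MASTER_ENDPOINT} --token ${TOKEN} --discovery-token-ca-cert-hash ${CA_HASH}"

# Print the command for review; run the real one as root on each worker node in turn.
echo "${JOIN_CMD}"

# If the original output has been lost, the MASTER can print a fresh join command:
# kubeadm token create --print-join-command
```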

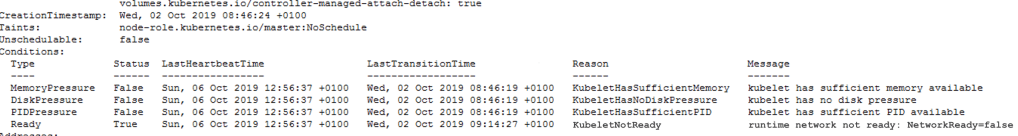

Once all worker nodes have been joined to the cluster I should be able to verify the status of the cluster. The expectation here is that I can see a cluster made up of 3 members using “kubectl get nodes”. Here you can see that my cluster does indeed have 3 nodes, but all of them are marked as “NotReady”.
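If you would rather check this from a script than read the table by eye, a small helper along these lines can count the nodes that are not yet ready. It is a sketch that assumes the usual column layout of “kubectl get nodes” (name first, status second); the function name `count_not_ready` is my own.

```shell
# Count nodes whose STATUS column is not exactly "Ready".
# Reads the output of "kubectl get nodes --no-headers" on stdin.
count_not_ready() {
  awk 'NF && $2 != "Ready" { n++ } END { print n + 0 }'
}

# On the MASTER, as a user with a working kubeconfig:
# kubectl get nodes --no-headers | count_not_ready
```

A result of 0 means every node reports “Ready”; at this point in the series the count should equal the number of nodes in the cluster.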

So there’s more to do. If I use “kubectl describe nodes” then I can get more information about each node. The output from the describe command includes a message that shows the network configuration is not complete. This message is repeated for each of my 3 machines.
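The describe output is fairly long, so it can help to filter it down to the lines that mention the network. The helper below is a simple sketch; the exact wording of the message depends on the Kubernetes version and container runtime, which is why it only matches on the word “network”. The function name `network_lines` is hypothetical.

```shell
# Keep only the lines that mention the network (case-insensitive).
# "|| true" stops the function from reporting an error when nothing matches.
network_lines() {
  grep -i 'network' || true
}

# On the MASTER, as a user with a working kubeconfig:
# kubectl describe nodes | network_lines
```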

The “NotReady” status is down to the fact that I have not yet configured the NSX Container Plug-in (NCP). I will cover this once I have NSX-T configured, which will be the topic of the next article.
